Figuring out how often claims happen is a big deal in the insurance world. Pricing isn’t just about how much a claim costs; it’s also about how many claims we’re talking about. This is where frequency trend modeling in insurance comes into play. It helps insurers get a handle on these patterns, which matters for setting prices and keeping the whole system solvent. We’re going to look at why this is so important and how it’s done.

Key Takeaways

- Understanding how often claims occur is as vital as knowing their cost for setting insurance prices. This is the core of frequency trend modeling in insurance.

- Historical data and smart analytics, including machine learning, are key tools for building accurate frequency models. Breaking down risks into smaller groups also helps.

- Many things can shift claim frequency, from people’s habits and economic changes to weather events and new technologies. Keeping an eye on these influences is ongoing work.

- Advanced math and statistical methods, like generalized linear models and time series analysis, help insurers create more precise frequency trend models for insurance.

- Effective frequency modeling directly impacts how insurers classify risks, set underwriting rules, and decide on things like deductibles, all to better manage potential losses.

Understanding Frequency Trend Modeling in Insurance

When we talk about insurance, a big part of figuring out prices involves looking at how often claims happen. This is what we call frequency. It’s different from how much those claims cost, which is called severity. Think about car insurance: you might have a lot of small fender benders (high frequency, lower severity) but also the occasional major crash (lower frequency, high severity). Understanding these patterns helps insurers set their base rates. These rates are like the starting point before any specific discounts or extra charges are applied based on individual risks.

It’s really important to separate frequency from severity because they tell different stories about risk. For example, a policy might have a low chance of a claim happening, but if it does, it could be very expensive. Or, claims might happen often, but they’re usually minor.

Here’s a quick look at how frequency and severity can differ:

- High Frequency, Low Severity: Think of routine maintenance claims or minor traffic accidents. Lots of them happen, but the cost per claim is usually manageable.

- Low Frequency, High Severity: This is more like major natural disasters or large-scale liability lawsuits. They don’t happen often, but when they do, the financial impact can be huge.

- High Frequency, High Severity: This is the worst-case scenario, though less common. It could involve something like widespread product defects leading to numerous costly claims.

- Low Frequency, Low Severity: These are minor, infrequent events that might not even warrant a claim.
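To make the frequency/severity split concrete, here is a minimal sketch of the standard arithmetic. The function names and the sample book of business are made up for illustration; the only thing assumed is the usual definition of frequency as claims per unit of exposure and severity as average cost per claim.

```python
def frequency(claim_count, exposure):
    """Claims per unit of exposure (for example, per earned car-year)."""
    return claim_count / exposure


def severity(total_loss, claim_count):
    """Average cost per claim."""
    return total_loss / claim_count


def pure_premium(claim_count, total_loss, exposure):
    """Expected loss cost per exposure unit, i.e. frequency times severity."""
    return frequency(claim_count, exposure) * severity(total_loss, claim_count)


# Hypothetical book: 1,000 car-years of exposure, 80 claims, 240,000 in losses.
freq = frequency(80, 1000)            # claims per car-year
sev = severity(240_000, 80)           # average cost per claim
pp = pure_premium(80, 240_000, 1000)  # loss cost per car-year

print(f"frequency={freq:.3f}, severity={sev:,.0f}, pure premium={pp:,.2f}")
```

Two books with the same pure premium can look very different: one driven by many small claims, the other by a few large ones. That is why the two pieces are modeled separately.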

Modeling frequency helps insurers predict future claim volumes. This prediction is key for making sure they have enough money set aside to pay out claims and stay financially stable. It’s a core part of how insurance works as a financial risk allocation mechanism.

Accurately forecasting claim frequency is not just about setting prices; it’s about managing the overall financial health of the insurance operation. It allows for better planning and resource allocation, ultimately benefiting policyholders through more stable premiums and reliable coverage.

This understanding is fundamental for setting appropriate premiums and managing the overall risk portfolio. It’s a core part of actuarial science and how insurers assess risk. The goal is to make sure that the premiums collected are enough to cover the expected claims and expenses, while also allowing for a reasonable profit. Regulators watch this process closely to ensure that rates are fair and justified, so rate approval and regulatory scrutiny are a big part of the insurance world.

Data-Driven Approaches to Frequency Modeling

When we talk about modeling frequency trends in insurance, we’re really looking at how often claims are likely to happen. It’s not about how much each claim will cost (that’s severity), but the sheer number of them. This is a core part of setting insurance prices.

Leveraging Historical Claims Data

Insurers have mountains of historical claims data. This isn’t just random information; it’s a goldmine for understanding past patterns. By digging into this data, we can see how often certain types of events occurred, who was involved, and where they happened. This helps us build a baseline for what to expect in the future. For example, looking at auto claims data might show that claims involving younger drivers in urban areas tend to happen more frequently than with older drivers in rural settings. This kind of insight is fundamental for assessing risk.

Here’s a simplified look at how we might break down historical data:

- Policy Type: Auto, Home, Business, etc.

- Claim Year: The year the claim occurred.

- Frequency Count: Number of claims in that year for that policy type.

- Key Demographics: Age, location, vehicle type (for auto), property type (for home).
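As a rough sketch of that breakdown, the snippet below rolls hypothetical policy-year records up into a claim frequency per segment. The record layout, the age bands, and the `frequency_by_segment` helper are assumptions for illustration, not a real insurer’s schema.

```python
from collections import defaultdict

# Hypothetical policy-year records:
# (policy_type, claim_year, age_band, exposure_in_years, claim_count)
records = [
    ("auto", 2022, "18-25", 1.0, 1),
    ("auto", 2022, "18-25", 0.5, 0),
    ("auto", 2022, "40-60", 1.0, 0),
    ("auto", 2022, "40-60", 1.0, 0),
    ("auto", 2023, "18-25", 1.0, 2),
    ("auto", 2023, "40-60", 1.0, 1),
    ("home", 2023, "40-60", 1.0, 0),
]


def frequency_by_segment(rows):
    """Sum exposure and claims per (type, year, age band); return claims per exposure-year."""
    totals = defaultdict(lambda: [0.0, 0])  # segment -> [exposure, claims]
    for policy_type, year, age_band, exposure, claims in rows:
        segment = (policy_type, year, age_band)
        totals[segment][0] += exposure
        totals[segment][1] += claims
    return {seg: claims / exp for seg, (exp, claims) in totals.items() if exp > 0}


table = frequency_by_segment(records)
for segment, freq in sorted(table.items()):
    print(segment, round(freq, 3))
```

Dividing by exposure rather than by policy count matters here: a policy in force for half a year should only count as half a year of opportunity to have a claim.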

Analyzing past claims isn’t just about looking backward; it’s about building a foundation for predicting what might happen next. The more detailed and accurate the historical records, the better our initial models will be.

Predictive Analytics and Machine Learning

Beyond just looking at past numbers, we can use more advanced tools. Predictive analytics and machine learning algorithms can find complex patterns in the data that might not be obvious to the human eye. These tools can process vast amounts of information, identifying subtle correlations between different factors and claim frequency. Think of it like a super-smart detective that can connect seemingly unrelated clues. This allows for a much more granular understanding of risk.

Some common techniques include:

- Regression Analysis: To understand the relationship between different variables and claim frequency.

- Decision Trees: To create rule-based systems for predicting risk.

- Neural Networks: For identifying highly complex, non-linear patterns.

The Importance of Granular Risk Segmentation

Simply looking at broad categories isn’t enough anymore. We need to get down to the specifics. Granular risk segmentation means breaking down policyholders into very small, specific groups based on their unique characteristics. Instead of just ‘drivers,’ we might look at ‘drivers aged 18-25 in zip code X who drive a compact car for commuting purposes.’ This level of detail allows us to tailor our frequency models much more precisely. It helps us understand that not all risks within a larger group are the same, leading to fairer pricing and better risk management overall.

Factors Influencing Frequency Trends

When we talk about insurance, the frequency of claims is a big deal. It’s basically how often people tend to file a claim for a specific type of risk. Think about it – if a certain event happens more often, the insurance company has to be prepared to pay out more claims. Several things can make these frequencies go up or down over time. It’s not just random; there are real-world reasons behind it.

Socioeconomic and Behavioral Influences

How people live and behave really impacts claim frequency. For instance, changes in the economy can affect things. If people have less money, they might be less likely to maintain their property, which could lead to more claims down the line. Also, shifts in lifestyle matter. More people working from home might change car insurance claims, for example. We also see trends in things like theft or vandalism, which can be tied to social factors. Understanding these human elements is key to predicting future claim numbers. It’s about looking at demographics, income levels, and even cultural shifts that might make certain risks more or less common. For example, a rise in distracted driving, sadly, often leads to more auto accidents.

Environmental and Catastrophic Events

Nature can certainly throw a wrench into frequency models. We’ve seen a definite increase in the frequency and intensity of natural disasters like hurricanes, floods, and wildfires. These aren’t just isolated incidents anymore; they seem to be happening more regularly. This means insurers need to adjust their expectations for how often claims related to these events will occur. It’s not just about the big, dramatic events either. Even smaller, more localized weather events, like severe hailstorms, can contribute to a higher claim count over time. Climate change is a major driver here, making historical data less reliable for predicting future patterns.

Technological Advancements and New Risks

Technology is a double-edged sword when it comes to insurance claims. On one hand, new tech can help prevent losses. Think of advanced security systems for homes or better safety features in cars. These can lower claim frequencies. However, technology also creates entirely new risks. Cyberattacks are a prime example. As businesses and individuals rely more on digital systems, the potential for data breaches and cyber fraud grows, leading to new types of claims that weren’t even a consideration a decade ago. We also see advancements in areas like drone technology or the increasing use of electric vehicles, each bringing its own set of potential risks and claim scenarios that need careful modeling. It’s a constant race to keep up with how innovation changes the risk landscape.

The interplay between human behavior, environmental shifts, and technological evolution creates a dynamic environment for insurance. Predicting claim frequency requires a multifaceted approach that considers these interconnected factors, moving beyond simple historical analysis to incorporate forward-looking assessments of societal and environmental trends. This adaptability is vital for maintaining accurate pricing and adequate reserves.

Advanced Modeling Techniques for Frequency

Generalized Linear Models in Actuarial Science

When we talk about modeling claim frequency, especially in insurance, Generalized Linear Models (GLMs) are a big deal. They’re really useful because they let us look at how different factors, like the age of a driver or the type of building, might influence how often a claim happens. Unlike ordinary linear regression, GLMs can handle the fact that claim counts are non-negative integers and often have a skewed distribution. This means they’re a good fit for the kind of data we see in insurance.

Think about it: you can’t have -1 claims, right? And most policies don’t have a massive number of claims each year, but a few might. GLMs, often using a Poisson or Negative Binomial distribution, can capture this. We can include things like policy limits and deductibles as part of the model, too. The key is that GLMs allow us to model the expected number of claims based on a set of predictor variables, providing a flexible framework for understanding frequency drivers. This is a step up from just looking at averages. We can also use them to predict future claim counts, which is pretty important for setting rates. It’s all about getting a more accurate picture of risk.
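Here is a minimal, hand-rolled sketch of a Poisson GLM with a log link and an exposure offset, fitted by Newton-Raphson. In practice you would likely reach for a statistics package instead; the pure-Python version, the function names, and the tiny data set (one intercept plus a hypothetical “young driver” flag) are assumptions chosen only to show the mechanics.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def fit_poisson_glm(X, y, exposure, n_iter=25):
    """Fit log(E[claims]) = log(exposure) + X . beta by Newton-Raphson."""
    p = len(X[0])
    beta = [0.0] * p
    for _ in range(n_iter):
        grad = [0.0] * p
        hess = [[0.0] * p for _ in range(p)]
        for row, yi, ei in zip(X, y, exposure):
            mu = ei * math.exp(sum(b * x for b, x in zip(beta, row)))
            for j in range(p):
                grad[j] += (yi - mu) * row[j]          # score vector
                for k in range(p):
                    hess[j][k] += mu * row[j] * row[k]  # Fisher information
        step = solve(hess, grad)
        beta = [b + s for b, s in zip(beta, step)]
    return beta


# Hypothetical cells: columns are [intercept, young_driver_flag].
X = [[1.0, 0.0], [1.0, 0.0], [1.0, 1.0], [1.0, 1.0]]
y = [30, 20, 45, 15]                   # claim counts per cell
exposure = [600.0, 400.0, 300.0, 100.0]  # car-years per cell

beta = fit_poisson_glm(X, y, exposure)
base_rate = math.exp(beta[0])    # expected claims per car-year, baseline group
relativity = math.exp(beta[1])   # multiplicative factor for young drivers
print(round(base_rate, 4), round(relativity, 4))
```

With a single yes/no factor the fit simply reproduces each group’s observed claims per car-year, which makes it easy to sanity-check. The payoff of a GLM comes when several rating factors are fitted together and each gets its own multiplicative relativity.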

Bayesian Methods for Frequency Estimation

Bayesian methods offer another powerful way to approach frequency modeling, especially when we want to incorporate prior knowledge or deal with situations where data might be a bit sparse. The core idea here is that we start with a ‘prior belief’ about the frequency distribution, and then we update that belief using the actual data we’ve collected. This is different from traditional frequentist approaches, which rely solely on the observed data.

One of the main advantages of Bayesian methods is their ability to handle uncertainty. They provide a full probability distribution for the estimated frequency, not just a single point estimate. This means we get a range of possible outcomes, which can be really helpful for risk management. For example, if we’re looking at a new type of risk or a very niche market, we might not have a lot of historical data. A Bayesian approach lets us combine what little data we have with expert opinion or information from similar risks. This can lead to more stable and reliable estimates, especially when dealing with low-frequency, high-severity events where historical data is inherently limited. It’s a way to be more robust in our predictions.
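One common way to sketch this updating step is the Gamma-Poisson conjugate pair: a Gamma prior on the claim rate combined with Poisson claim counts gives a Gamma posterior in closed form. The prior parameters and the observed experience below are hypothetical, and the choice of this particular model is an assumption for illustration rather than something the discussion above prescribes.

```python
def gamma_poisson_update(prior_shape, prior_rate, claims, exposure):
    """Return the posterior Gamma(shape, rate) for a Poisson claim rate."""
    return prior_shape + claims, prior_rate + exposure


# Hypothetical prior from similar risks: mean 5/50 = 0.10 claims per year,
# carrying roughly the weight of 50 exposure-years.
prior_shape, prior_rate = 5.0, 50.0

# Sparse experience on the new risk: 3 claims in 10 exposure-years.
claims, exposure = 3, 10.0

post_shape, post_rate = gamma_poisson_update(prior_shape, prior_rate, claims, exposure)
posterior_mean = post_shape / post_rate
posterior_var = post_shape / post_rate ** 2

# The posterior mean is a weighted blend of observed and prior frequency.
weight_on_data = exposure / (prior_rate + exposure)
blended = weight_on_data * (claims / exposure) + (1 - weight_on_data) * (prior_shape / prior_rate)

print(round(posterior_mean, 4), round(posterior_var, 6), round(blended, 4))
```

The observed frequency here is 0.30, but with so little data the estimate only moves from 0.10 to about 0.13. As exposure accumulates, the weight on the data grows and the prior matters less.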

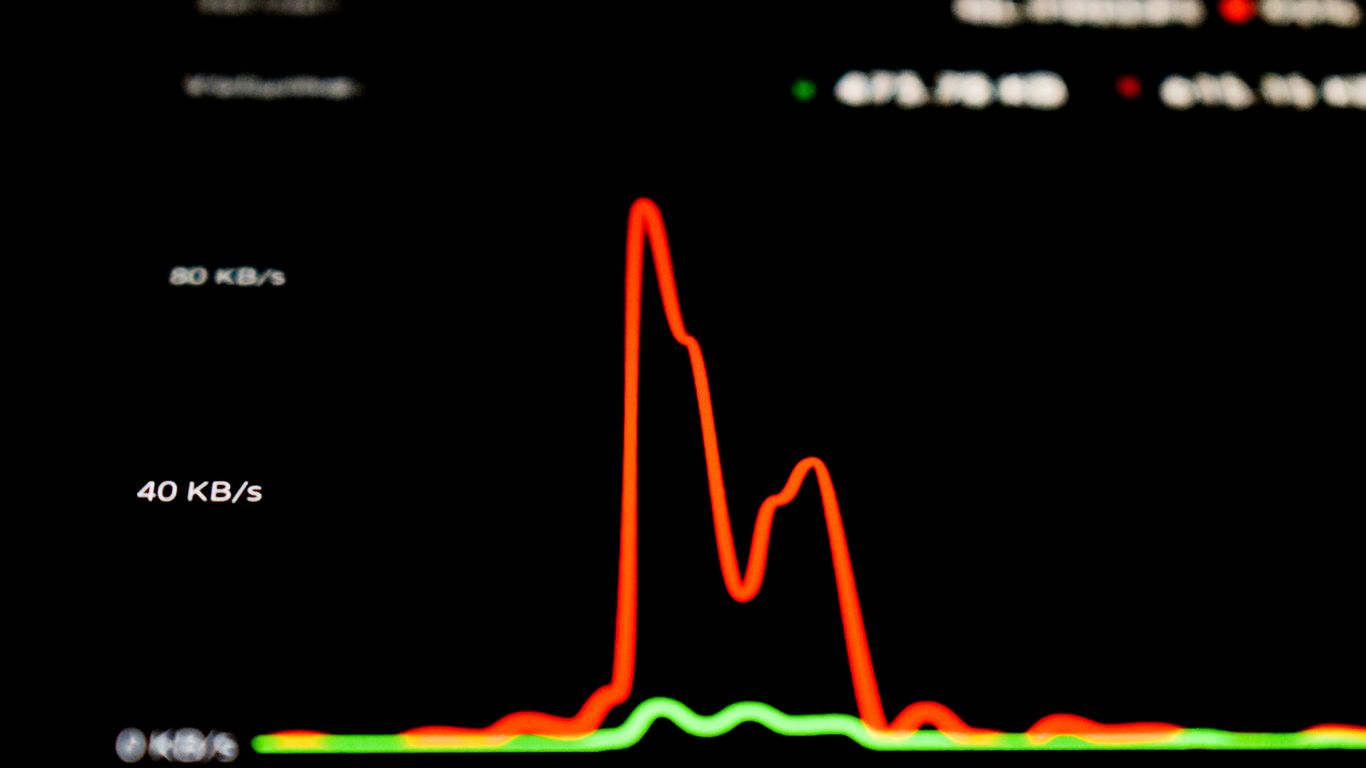

Time Series Analysis for Trend Identification

Time series analysis is all about looking at data points collected over time to identify patterns, trends, and seasonality. In insurance, this is super useful for understanding how claim frequencies change year over year. We’re not just looking at a snapshot; we’re watching how things evolve.

For instance, we might see that the frequency of auto claims has been steadily decreasing over the last decade, perhaps due to advancements in vehicle safety or changes in driving habits. Or maybe the frequency of certain types of property claims is increasing due to more extreme weather events. Time series models, like ARIMA (AutoRegressive Integrated Moving Average) or exponential smoothing, can help us quantify these trends. They can also help us forecast future frequencies, assuming that past patterns will continue to some extent. It’s important to remember that these models often assume some level of stability in the underlying processes, so we need to be mindful of external factors that could disrupt these trends. Identifying these shifts early is key for insurers to adjust their pricing and reserving strategies effectively. It helps us stay ahead of the curve.

Here’s a quick look at what we might analyze:

- Trend: The long-term direction of the data (e.g., increasing, decreasing, or stable frequency).

- Seasonality: Regular patterns that repeat over a fixed period (e.g., higher claim frequency in winter months for certain types of insurance).

- Cyclicality: Longer-term fluctuations that are not of a fixed period, often related to economic conditions.

- Irregularity/Noise: Random variations that cannot be explained by the other components.
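As a small illustration of pulling out the trend component, the sketch below fits a log-linear trend to annual frequencies and applies simple exponential smoothing. These are deliberately simpler stand-ins for a full ARIMA model, and the synthetic series (an exact 3% annual decline) is an assumption chosen so the result is easy to check.

```python
import math


def log_linear_trend(years, freqs):
    """Least-squares fit of log(freq) = intercept + slope * year."""
    n = len(years)
    logs = [math.log(f) for f in freqs]
    x_bar = sum(years) / n
    y_bar = sum(logs) / n
    sxx = sum((x - x_bar) ** 2 for x in years)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(years, logs))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def project(slope, intercept, year):
    """Projected frequency for a given year under the fitted trend."""
    return math.exp(intercept + slope * year)


def exponential_smoothing(series, alpha):
    """Simple exponential smoothing; alpha near 1 reacts faster to new data."""
    level = series[0]
    smoothed = [level]
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
        smoothed.append(level)
    return smoothed


# Synthetic annual claim frequencies declining 3% per year.
years = [2018, 2019, 2020, 2021, 2022, 2023]
freqs = [0.10 * 0.97 ** i for i in range(len(years))]

slope, intercept = log_linear_trend(years, freqs)
annual_trend = math.exp(slope) - 1          # fitted annual change
freq_2025 = project(slope, intercept, 2025)  # two years past the data
print(f"annual trend: {annual_trend:+.1%}, projected 2025 frequency: {freq_2025:.4f}")
```

Real series are noisy and may contain seasonality or structural breaks, so a fitted trend like this is a starting point for judgment, not a forecast to apply blindly.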

Understanding these temporal dynamics is vital. It allows insurers to move beyond static risk assessments and adopt a more dynamic approach to pricing and reserving, acknowledging that the risk landscape is not fixed but constantly shifting. This proactive stance is essential for long-term financial health and competitiveness in the insurance market.

Integrating Frequency Models into Underwriting

Frequency models are not just abstract actuarial tools; they directly influence how insurance policies are written and priced. When we talk about underwriting, we’re really talking about the process of assessing and selecting risks. This is where the rubber meets the road for insurers, deciding who gets coverage and at what cost. The goal is to create a balanced portfolio that is both profitable and sustainable.

Risk Classification and Frequency Assessment

Underwriters use frequency data to sort applicants into different risk categories. Think about it: a driver with a history of speeding tickets is likely to have a higher claim frequency than someone with a clean record. This historical data, combined with predictive analytics, helps classify risks more accurately. It’s not just about individual history, though. We also look at broader trends. For instance, certain geographic areas might experience more frequent weather-related claims, which would influence the classification of properties in those zones.

Here’s a simplified look at how frequency might influence classification:

| Risk Factor | Low Frequency | Medium Frequency | High Frequency |
|---|---|---|---|
| Driving Record | Clean | Minor violations | Multiple tickets/accidents |
| Property Location | Low hazard | Moderate hazard | High hazard (flood/wildfire) |
| Business Type | Low exposure | Moderate exposure | High exposure (e.g., construction) |

Underwriting Guidelines and Frequency Parameters

Every insurance company has underwriting guidelines. These are essentially the rules that underwriters follow. They often include specific parameters related to frequency. For example, a guideline might state that policies with an expected claim frequency above a certain threshold require additional review or may not be eligible for standard coverage. These guidelines are shaped by the insurer’s overall risk appetite and regulatory requirements. They help ensure consistency across the underwriting team, but experienced underwriters can often make exceptions based on unique circumstances. It’s a balance between following the rules and applying professional judgment. Insurance agents are often the first point of contact, gathering much of the initial information that feeds into this assessment.
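A frequency parameter in a guideline might be encoded along these lines. The thresholds, the function name, and the outcome labels are all hypothetical; real guidelines are company-specific and leave room for underwriter judgment.

```python
def underwriting_action(expected_frequency, refer_above=0.15, decline_above=0.30):
    """Map a modeled claim frequency to a guideline outcome (illustrative thresholds)."""
    if expected_frequency > decline_above:
        return "ineligible for standard coverage"
    if expected_frequency > refer_above:
        return "refer for additional review"
    return "standard"


for freq in (0.05, 0.20, 0.40):
    print(freq, "->", underwriting_action(freq))
```

Keeping the thresholds as parameters makes it easier to adjust them when risk appetite or regulatory requirements change.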

The Role of Deductibles in Managing Frequency

Deductibles are a powerful tool for managing claim frequency. When a policyholder agrees to pay a certain amount out-of-pocket before the insurance kicks in, it changes their behavior. They’re more likely to be careful and avoid small, frequent claims because they bear the initial cost. A higher deductible generally means a lower premium, reflecting the reduced expected claim costs for the insurer. It’s a trade-off for the policyholder: pay less upfront for coverage, but be prepared to cover more if a loss occurs. This self-retention encourages a more risk-conscious approach, which benefits everyone involved by keeping overall claim costs down. The underwriting process considers how deductibles can align policyholder incentives with the insurer’s goals.
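Beyond the behavioral effect, there is a purely mechanical one: losses smaller than the deductible never become insurer claims at all. The sketch below shows this with a made-up list of loss amounts; the numbers and the `insurer_claims` helper are assumptions for illustration.

```python
def insurer_claims(losses, deductible):
    """Return the insurer's payments for losses that exceed the deductible."""
    return [loss - deductible for loss in losses if loss > deductible]


# Hypothetical ground-up losses for one group of policyholders.
losses = [150, 300, 450, 800, 1200, 2500, 6000, 400, 950, 200]

summary = {}
for deductible in (0, 500, 1000):
    paid = insurer_claims(losses, deductible)
    summary[deductible] = (len(paid), sum(paid))  # (claim count, total paid)
    print(f"deductible {deductible}: {len(paid)} claims, {sum(paid)} paid")
```

In this example a 500 deductible halves the claim count while cutting paid losses by a smaller share, because it removes the many small losses but only trims the large ones.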

The interplay between frequency models and underwriting is dynamic. As data improves and analytical techniques become more sophisticated, the ability to predict and manage claim frequency gets better. This, in turn, allows for more precise risk classification, tailored underwriting guidelines, and more effective use of tools like deductibles to create a stable and fair insurance market.

Challenges in Frequency Trend Modeling

Modeling frequency trends in insurance isn’t always straightforward. There are a few hurdles that can make things tricky.

Data Quality and Availability Issues

One of the biggest headaches is getting good data. Sometimes, the historical claims data we have just isn’t complete or accurate enough. Maybe records are missing, or there are inconsistencies in how information was entered over the years. This can really mess with the patterns we’re trying to spot. Plus, for newer types of risks or in less developed markets, we might just not have enough data to build a reliable model.

- Incomplete or missing claim records.

- Inconsistent data entry practices over time.

- Lack of historical data for emerging risks.

- Geographic variations in data collection standards.

The accuracy of any frequency model is directly tied to the quality and completeness of the underlying data.

Model Transparency and Explainability

Another challenge is making sure our models make sense. Some advanced techniques, like complex machine learning algorithms, can be like black boxes. We might get a prediction, but it’s hard to explain exactly why the model came up with that number. This is a problem because actuaries and regulators need to understand the reasoning behind pricing and reserving decisions. If we can’t explain it, it’s harder to trust and get approval for.

Adapting to Evolving Risk Landscapes

Finally, the world keeps changing, and so do the risks. Things like climate change, new technologies, and shifts in societal behavior can all impact how often claims occur. Our models need to be flexible enough to keep up. A model that worked perfectly five years ago might be totally out of date today because the underlying risk factors have shifted. We’re constantly playing catch-up to make sure our predictions reflect the current reality and what might happen next.

The Impact of Climate Change on Frequency

Climate change is really shaking things up for insurance companies, especially when it comes to how often claims happen. We’re seeing more extreme weather events, like bigger storms and longer droughts, which directly leads to more property damage and other losses. This isn’t just a theoretical problem; it’s a tangible shift that makes predicting future claim numbers much harder.

Increasing Frequency of Natural Catastrophes

Think about it: hurricanes seem to be getting stronger, wildfires are burning larger areas, and floods are becoming more common in places that never used to flood. These aren’t isolated incidents anymore. They’re becoming more frequent and more intense. This means that coverages for things like wind, hail, and water damage are being hit much harder and more often than historical data might suggest. It’s a direct challenge to how we’ve always modeled risk.

- More frequent severe thunderstorms

- Increased wildfire activity in new regions

- Higher incidence of coastal flooding

- Prolonged periods of drought impacting agriculture

Strain on Traditional Risk Models

Our old ways of calculating risk, which relied heavily on past patterns, are struggling to keep up. When the past isn’t a reliable guide to the future, our models can become outdated pretty quickly. This puts a lot of pressure on actuaries and underwriters to find new ways to assess risk. We need to look at forward-looking data and climate projections, not just what happened last year or the year before. It’s a big shift from just looking at historical claims data to understanding future trends.

The unpredictability introduced by climate change means that insurers must constantly re-evaluate their assumptions about how often certain events will occur. This requires a more dynamic approach to modeling and pricing.

Developing Climate-Resilient Insurance Strategies

So, what’s the answer? Insurers are looking at a few things. One is adjusting premiums to reflect the new reality of higher claim frequencies. Another is encouraging policyholders to take steps to reduce their risk, like making homes more resistant to wind or floods. We’re also seeing a push for new types of insurance products that can better handle these evolving risks. It’s all about building a more robust system that can withstand the impacts of a changing climate, ensuring that property coverage and other lines remain available and affordable.

Regulatory Considerations in Frequency Modeling

When we talk about modeling frequency trends in insurance, we can’t just ignore what the regulators are looking at. It’s not just about building the most accurate model possible; it’s also about making sure that model fits within the rules and expectations set by governing bodies. This is a big deal because getting it wrong can lead to all sorts of problems, from fines to having your rates rejected.

Rate Approval and Regulatory Scrutiny

One of the main areas where frequency modeling meets regulation is in rate approval. Insurers need to show that their proposed rates are fair, adequate, and not discriminatory. This means the actuarial justification behind those rates, including the frequency assumptions, has to be solid. Regulators will look closely at the data used, the methodologies applied, and the overall logic. They want to see that the expected frequency of claims is properly accounted for and that the pricing reflects the risk accurately. If a model suggests a significant shift in frequency, like a sudden increase in auto claims due to new driving behaviors, the insurer needs to have strong evidence to back it up before they can adjust rates accordingly. This process can be quite involved, often requiring detailed filings and explanations. It’s a key part of ensuring insurer stability.

Data Privacy and Algorithmic Fairness

As models get more sophisticated, especially those using machine learning, concerns about data privacy and algorithmic fairness become more prominent. Regulators are increasingly focused on how personal data is collected, used, and protected. When building frequency models, especially those that might segment risks very finely, insurers must be careful not to inadvertently discriminate against certain groups. This means ensuring that the variables used in the model are directly related to risk and are not proxies for protected characteristics. For example, using zip codes might seem like a reasonable proxy for certain risks, but if it disproportionately affects minority groups, it could raise fairness issues. Transparency in how these models work is also becoming more important, so regulators can assess them for bias. This is a complex area that requires careful attention to both technical accuracy and ethical considerations, especially when dealing with consumer protection.

Ensuring Solvency Through Accurate Forecasting

Ultimately, regulators are tasked with making sure insurance companies remain solvent and can pay claims. Frequency modeling plays a direct role in this. If an insurer underestimates claim frequency, it could lead to inadequate reserves and financial distress. Accurate forecasting of frequency trends is therefore not just a pricing exercise; it’s a critical component of financial stability. Regulators will examine an insurer’s reserving practices and capital adequacy, which are directly influenced by their loss frequency projections. Models that can reliably predict future claim occurrences help insurers maintain sufficient capital to weather unexpected events and fulfill their obligations to policyholders. This focus on solvency is a core responsibility of state insurance departments and is a primary reason for the detailed rate approval process and oversight.

Future Directions in Frequency Modeling

The way we model claim frequency is always changing, and there are some exciting new paths opening up. We’re seeing a big shift towards more dynamic and personalized approaches, moving away from static, one-size-fits-all methods. This is partly driven by new technologies and a better understanding of how different factors influence risk.

The Rise of Insurtech and Innovation

Insurtech companies are really shaking things up. They’re built on technology from the ground up, which lets them experiment with new ways to look at data and build models. Think about how they’re using AI and machine learning not just to predict frequency, but to do it in near real-time. This allows for much quicker adjustments to pricing and underwriting. They’re also great at creating user-friendly digital platforms, making the whole insurance process smoother for customers. Traditional insurers are definitely paying attention and often partnering with these innovative firms to bring these advancements into their own operations. It’s a good way to blend established knowledge with fresh tech capabilities.

Usage-Based and Parametric Insurance Models

We’re also seeing a move towards insurance models that are more directly tied to actual behavior or specific events. Usage-based insurance, for example, uses telematics data to track how people actually drive. If you’re a safe driver, your premiums might go down. This is a big change from just looking at general demographics. Then there’s parametric insurance. This type of coverage pays out when a specific, measurable event occurs, like an earthquake of a certain magnitude or a hurricane reaching a particular wind speed. It doesn’t wait for a traditional claims adjuster to assess damage. This can speed up payouts significantly, especially after a major disaster. These models require a lot of good data and clear communication with customers about how they work, but they offer a lot of flexibility. The goal is to align premiums more closely with individual risk profiles and provide faster financial relief when it’s needed most. This is a significant departure from older insurance structures and requires robust data governance.
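The parametric idea can be sketched as a simple trigger table: the payout depends only on the measured value, not on an assessed loss. The wind-speed tiers and payout amounts below are hypothetical and not taken from any real product.

```python
def parametric_payout(measured_wind_mph, tiers):
    """Pay the amount for the highest tier whose threshold was reached.

    tiers is a list of (threshold_mph, payout) pairs; below every threshold pays 0.
    """
    payout = 0
    for threshold, amount in sorted(tiers):
        if measured_wind_mph >= threshold:
            payout = amount
    return payout


# Hypothetical hurricane cover with three wind-speed tiers.
tiers = [(96, 25_000), (111, 50_000), (130, 100_000)]

for wind in (90, 111, 150):
    print(wind, "mph ->", parametric_payout(wind, tiers))
```

Because the trigger is objective, the frequency question becomes “how often does the measured index cross each threshold,” which is modeled from hazard data rather than from past claims.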

The Evolving Role of Data Scientists

As all these new technologies and models come into play, the role of data scientists in insurance is becoming even more important. They’re not just crunching numbers anymore; they’re building complex predictive models, working with AI, and trying to make sense of vast amounts of data. This means insurers need to invest in training and hiring people with these specialized skills. It’s not just about having the technology; it’s about having the right people to use it effectively. They need to understand not only the math and the tech but also the insurance business itself. This blend of skills is key to developing models that are accurate, fair, and compliant with regulations. The future workforce will definitely look different, with a growing demand for these tech-savvy professionals. It’s a constant effort to keep up with the pace of change and make sure our models are as good as they can be. It also means these professionals need to monitor claim reserve adequacy closely as reserves are adjusted.

Operationalizing Frequency Insights

So, you’ve spent a lot of time and effort building these sophisticated models to predict how often claims might happen. That’s great, but what do you actually do with that information? It’s not much use sitting in a report, right? The real value comes when you put those insights to work across the business. This is where operationalizing frequency trends really matters.

Streamlining Claims Processing with Data

Think about the claims department. When a claim comes in, the first thing adjusters need to do is figure out what happened and if it’s covered. Using the data and models you’ve developed, you can start to flag claims that look like they might be more frequent or have certain characteristics. This helps speed things up. For example, if a claim matches a pattern known for being straightforward, it can be fast-tracked. This means less time spent on simple cases and more time for complex ones. It’s all about making the process smoother and quicker for everyone involved. We can use claims data analytics to understand patterns and speed up handling.

Enhancing Fraud Detection Capabilities

Fraud is a big headache in insurance, and it often relates to how often claims are filed or the circumstances around them. By looking at frequency trends, you can spot unusual patterns that might suggest fraud. Maybe a certain type of claim is suddenly happening way more often than it should, or perhaps a policyholder is filing claims that don’t quite fit the usual story. Your models can act as an early warning system, flagging these suspicious activities for a closer look by specialized teams. This helps protect the company and, ultimately, honest policyholders. Detecting fraud is crucial for fairness.
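One simple way to sketch such an early warning is to ask how surprising an observed claim count is given the modeled expectation. The example below uses a Poisson tail probability; the Poisson assumption, the 0.01 cutoff, and the function names are illustrative choices, and a flag is only a prompt for human review, not evidence of fraud.

```python
import math


def poisson_tail(k, mean):
    """P(X >= k) for X ~ Poisson(mean)."""
    cdf_below_k = sum(math.exp(-mean) * mean ** i / math.factorial(i) for i in range(k))
    return 1.0 - cdf_below_k


def flag_unusual(observed_claims, expected_claims, p_threshold=0.01):
    """Flag a count that would be rare if the frequency model were right."""
    return poisson_tail(observed_claims, expected_claims) < p_threshold


# Hypothetical policyholder with a modeled frequency of 0.2 claims per year.
expected = 0.2
for observed in (1, 2, 3):
    print(observed, round(poisson_tail(observed, expected), 5), flag_unusual(observed, expected))
```

With an expected 0.2 claims a year, one or two claims are unremarkable, while three in a year falls well below the cutoff and would be routed for a closer look.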

Informing Product Development and Pricing Strategies

Finally, those frequency insights are gold for product development and pricing. If your models show that a particular risk is becoming more frequent, you might need to adjust your pricing for that risk. Or, perhaps you can design a new product that specifically addresses this emerging trend. Maybe you offer discounts for policyholders who take steps to reduce the likelihood of these frequent claims. It’s about being proactive and making sure your products and prices accurately reflect the real-world risks people face today.

- Pricing Adjustments: Modify premiums to reflect changing frequency.

- Product Innovation: Create new offerings for emerging risks.

- Risk Mitigation Incentives: Encourage policyholder actions to lower claim frequency.

The goal is to move from simply reacting to claims to actively shaping the risk landscape through informed decisions based on predictive insights. This proactive stance is key to long-term success in a dynamic environment.

Looking Ahead

So, we’ve covered a lot about how insurance companies figure out what to charge and how they manage risk. It’s not just guesswork; there’s a whole system involving data, trends, and smart planning. Things like how often claims happen versus how much they cost, and even how people behave, all play a part. Plus, with new tech and big changes like climate events, the way insurance works is always shifting. Staying on top of these trends is key for everyone involved, from the companies themselves to the people buying policies. It’s a complex but important area that keeps evolving.

Frequently Asked Questions

What is frequency in insurance?

Frequency in insurance is like asking how often something bad might happen. For example, how many times a car might get into a fender bender in a year, or how often a house might have a small leak. It’s about how often claims are expected to pop up.

How is frequency different from severity?

Frequency is about how often claims happen, while severity is about how much each claim costs. Think of it this way: getting a flat tire (high frequency, low severity) is different from a house burning down (low frequency, very high severity).

Why do insurance companies care about frequency?

Insurance companies need to know how often claims might happen to figure out how much to charge for insurance (the price or ‘base rate’). If claims happen a lot, they need to collect more money to pay for them all.

What kind of things can change how often claims happen?

Lots of things! Things like how people behave (driving more carefully or less), changes in the weather (more storms), or even new technology can affect how often claims occur. It’s a moving target!

Can insurance companies predict future claim frequency?

They try their best! They use past claims data and smart computer programs (like AI and machine learning) to make educated guesses about what might happen in the future. It’s not perfect, but it helps them prepare.

How does climate change affect claim frequency?

Climate change can make bad weather events, like hurricanes or floods, happen more often and be more severe. This means insurance companies might see more claims related to these natural disasters than they used to.

What are deductibles and how do they relate to frequency?

A deductible is the amount you pay out-of-pocket before insurance kicks in. When you have a deductible, you might be less likely to file small claims, which can help lower the overall ‘frequency’ of claims for the insurance company.

What’s the hardest part about predicting claim frequency?

It can be tough because the world is always changing! Getting good, reliable data can be tricky, and sometimes it’s hard to explain exactly why a computer model made a certain prediction. Plus, new risks we haven’t seen before can pop up.